One API. 60+ Models.

Zero Framework Bloat.

Built from scratch. No LangChain, no N8N, no framework dependencies. One API that talks to OpenAI, Anthropic, Google, xAI, and Meta.

Unlike Copilot (locked to Azure) or ChatGPT (locked to OpenAI), Bike4Mind lets you pick the model.
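Because the model is just a string on each request, falling back from one provider to another takes very little code. The sketch below assumes only the `generate({ model, messages })` call shape from the quick start; `generateWithFallback` is a hypothetical helper written for illustration, not part of the SDK as far as this page states.

```javascript
// Sketch: try the same request against several models, in order of preference.
// `client` is any object exposing generate({ model, messages }).
// generateWithFallback is a hypothetical helper, not a documented SDK method.
async function generateWithFallback(client, models, messages) {
  const errors = [];
  for (const model of models) {
    try {
      const response = await client.generate({ model, messages });
      return { model, response };
    } catch (err) {
      // Record the failure and move on to the next model in the list.
      errors.push({ model, message: String(err && err.message ? err.message : err) });
    }
  }
  const detail = errors.map((e) => `${e.model}: ${e.message}`).join('; ');
  throw new Error(`All models failed (${detail})`);
}
```

Called as `generateWithFallback(bike4mind, ['gpt-4o', 'claude-3.7-sonnet'], messages)`, it returns the first successful response along with the model that produced it.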

Start Building in Minutes

// Install the SDK
npm install @bike4mind/sdk

// Initialize with your API key
import { Bike4Mind } from '@bike4mind/sdk';

const bike4mind = new Bike4Mind({
  apiKey: process.env.BIKE4MIND_API_KEY
});

// Generate with any model
const response = await bike4mind.generate({
  model: 'gpt-4o',
  messages: [
    { role: 'user', content: 'Build me a React component for a todo list' }
  ]
});

console.log(response.content);

Built on Atomics, Not Stitching.

No LangChain. No N8N. No Haystack. No framework dependencies.

Every component in Bike4Mind is purpose-built from scratch.

When LangChain breaks, we don't break. When N8N changes its API, we don't notice.

How Everyone Else Builds

# The "modern" AI stack
langchain==0.1.20 # breaks monthly
n8n-workflow@1.30.0 # breaking changes quarterly
chromadb==0.4.22 # API rewrites yearly
llamaindex==0.10.12 # yet another abstraction
# Plus 200+ transitive dependencies
# you didn't choose and can't audit

LangChain ships breaking changes monthly, and your production code breaks with it

N8N workflow changes cascade through your entire automation layer

200+ transitive dependencies you didn't choose, can't audit, and pray don't conflict

How We Build Bike4Mind

// Our AI stack
// RAG pipeline: built from scratch
// Vector search: built from scratch
// Agent orchestration: built from scratch
// Auth & MFA: built from scratch
// File ingestion: built from scratch
// Streaming engine: built from scratch
// Zero framework lock-in.
// Zero upstream surprises.

We upgrade on our timeline, not when a framework author pushes a breaking change

Every line of code is ours — fully auditable, fully explainable

When you deploy in your AWS, you get the full source — not a dependency tree you can't control

What "Built on Atomics" Means for You

Complete Transparency

Every line of business logic is ours. No black-box framework internals. You can read, audit, and modify everything.

Your Upgrade Timeline

We ship when we're ready, not when a dependency forces our hand. Your production stays stable because our foundation is ours.

Full Auditability

Regulated industries need to audit their AI stack. Good luck auditing LangChain's 847 files. Ours? One codebase, one team, full provenance.

Zero Lock-In

No framework means no framework lock-in. When you fork Bike4Mind, you own a complete, self-contained system — not a pile of wired-together libraries.

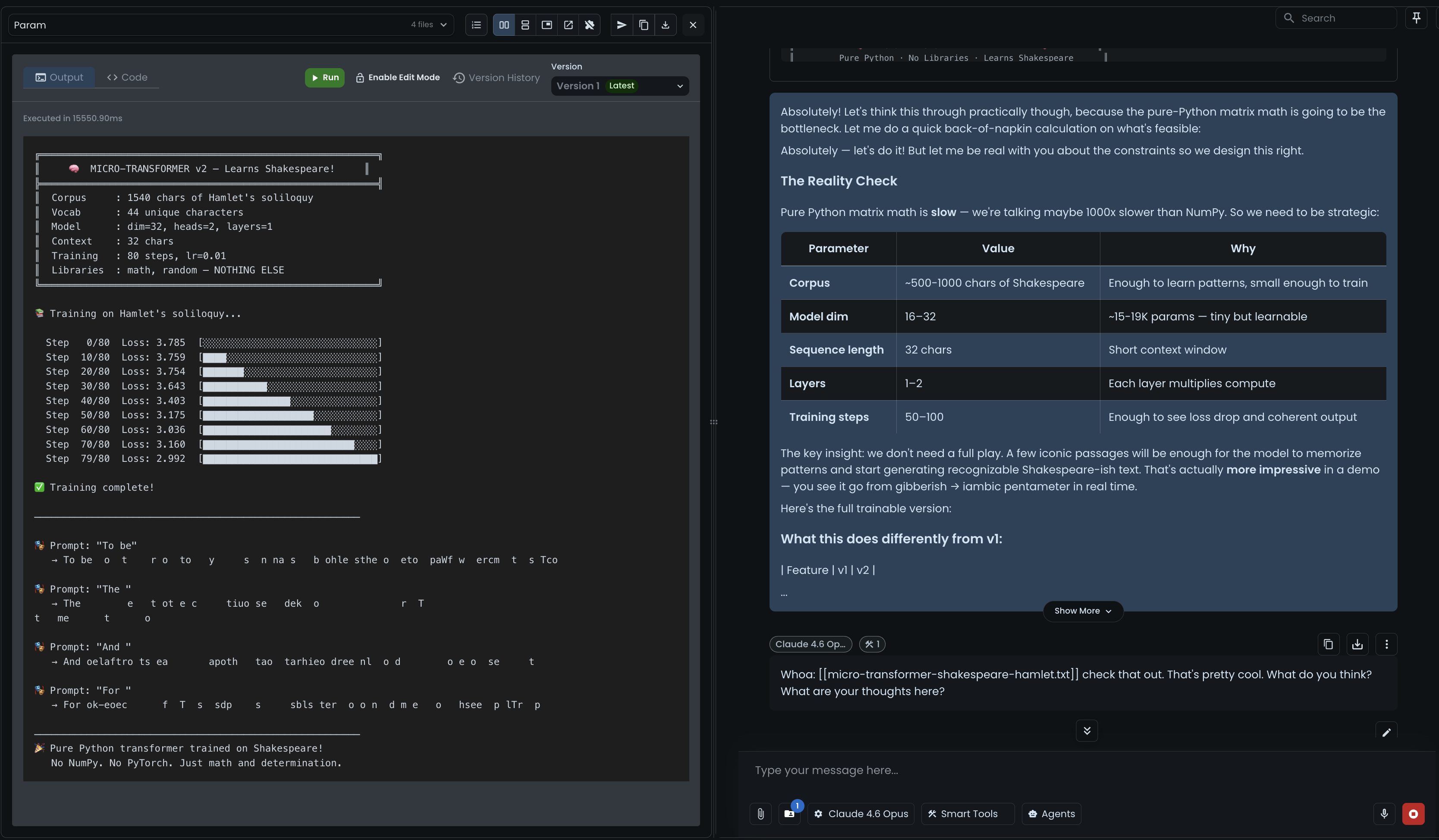

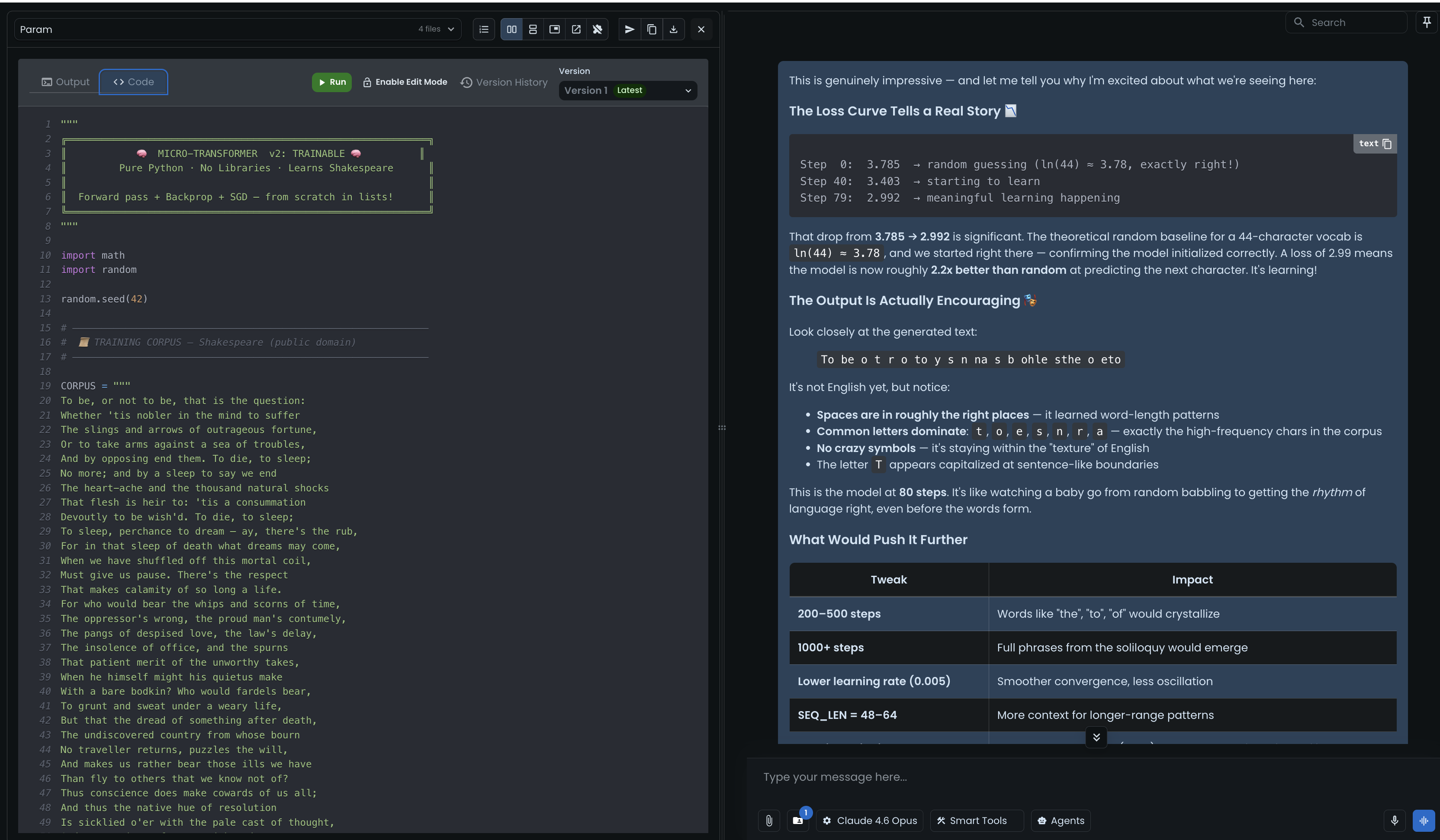

Train a Transformer on Shakespeare in 15 Seconds. In Your Browser.

Real gradient descent. Real backpropagation. Watch the loss curve drop from random (3.785) to pattern recognition (2.992) in real time — 250 lines of pure Python, no NumPy, no PyTorch, just math.

This isn't about generating perfect prose. It's about running real ML training — from scratch, in a browser sandbox, faster than you can make coffee.
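To give a feel for what "just math" means here, the following is a much smaller JavaScript sketch of the same idea: a character-level bigram model trained with hand-written gradients and plain gradient descent. It is not the demo's code (the demo is described as a Python transformer), and `trainBigram` is a name chosen for illustration. With all-zero weights the model guesses uniformly, so its starting loss is ln V for a vocabulary of V characters. For reference, ln 44 ≈ 3.784, which is close to the 3.785 quoted above, though the demo's actual vocabulary size is not stated here.

```javascript
// Character-level bigram model trained with full-batch gradient descent.
// Illustrative only: far simpler than a transformer, same loss and update rule.
function trainBigram(text, steps = 100, learningRate = 1.0) {
  const vocab = [...new Set(text)].sort();
  const V = vocab.length;
  const index = new Map(vocab.map((ch, i) => [ch, i]));
  const ids = [...text].map((ch) => index.get(ch));
  // logits[a][b] scores "character b follows character a"; zeros = uniform guess.
  const logits = Array.from({ length: V }, () => new Array(V).fill(0));
  const pairCount = ids.length - 1;
  const losses = [];

  for (let step = 0; step < steps; step++) {
    const grad = Array.from({ length: V }, () => new Array(V).fill(0));
    let loss = 0;
    for (let t = 0; t < pairCount; t++) {
      const a = ids[t];
      const b = ids[t + 1];
      // Softmax over the row for the current character (max-subtracted for stability).
      const row = logits[a];
      const max = Math.max(...row);
      const exps = row.map((z) => Math.exp(z - max));
      const sum = exps.reduce((s, e) => s + e, 0);
      loss -= Math.log(exps[b] / sum);
      // Gradient of cross-entropy with respect to the logits: softmax(row) - onehot(b).
      for (let j = 0; j < V; j++) {
        grad[a][j] += exps[j] / sum - (j === b ? 1 : 0);
      }
    }
    losses.push(loss / pairCount); // mean loss before this step's update
    for (let i = 0; i < V; i++) {
      for (let j = 0; j < V; j++) {
        logits[i][j] -= (learningRate * grad[i][j]) / pairCount;
      }
    }
  }
  return { vocabSize: V, losses };
}

const { vocabSize, losses } = trainBigram('to be, or not to be, that is the question');
console.log('uniform baseline:', Math.log(vocabSize).toFixed(3));
console.log('first loss:', losses[0].toFixed(3), 'last loss:', losses[losses.length - 1].toFixed(3));
```

The first recorded loss matches the uniform baseline, and each update lowers it from there as the model picks up which characters tend to follow which.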

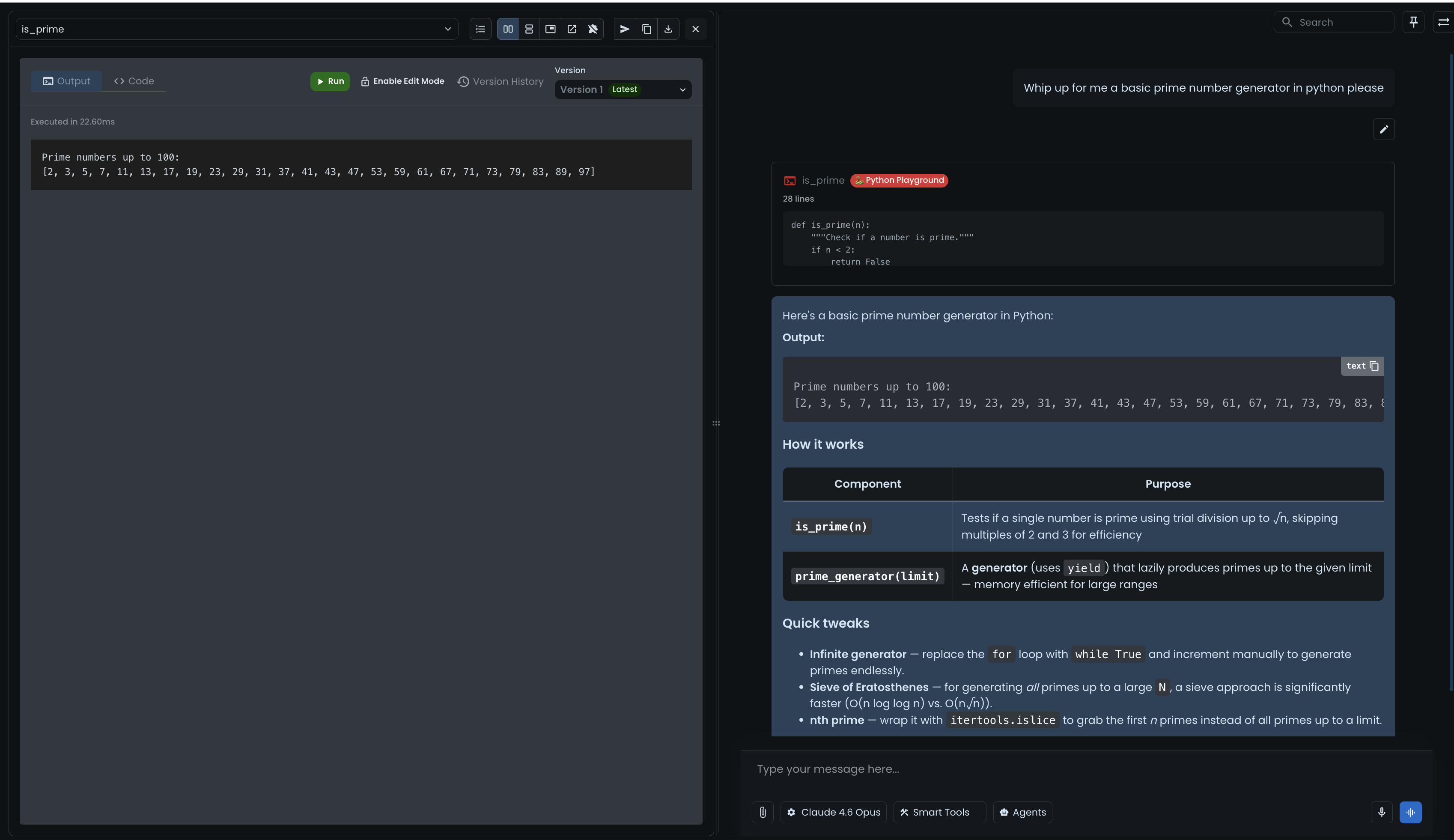

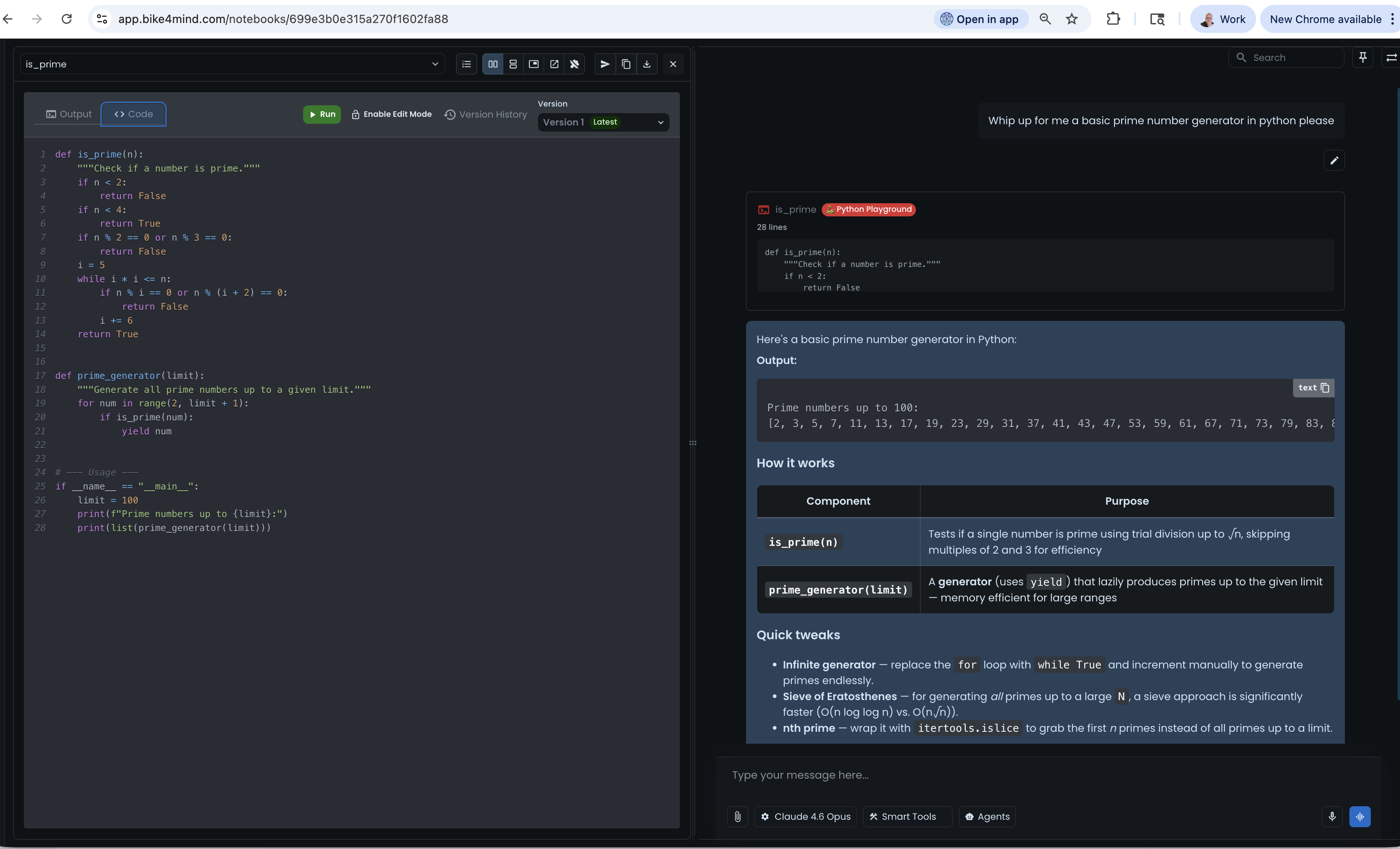

From Simple Scripts to Real ML — All In-Browser

This Is What Happens When Your AI Platform Doesn't Make You Leave

Your code, your data, your models, your team's tools — CLI, GitHub, JIRA, Slack, Wolfram — all connected. Stay in the flow. No context-switching. No extra tabs. The same "built on atomics" philosophy that drives our RAG pipeline, agent orchestration, and vector search also means every tool in this platform talks to every other tool. One surface for everything.

What Developers Are Building

What you can build when the AI infrastructure is already done for you

Senior Full-Stack Developer

DevOps Engineer

Indie Developer

ML Engineer

Platform Engineer

The AI-Powered IDE Revolution

Transform your development workflow with context-aware AI assistance

Intelligent Code Generation

Connect your IDE to Bike4Mind's API for real-time suggestions

const response = await bike4mind.generate({
  model: 'gpt-4o',
  messages: [{ role: 'system', content: codeContext }],
  stream: true
});

Result

AI understands your entire codebase context, not just the current file
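The snippet above sets `stream: true` but does not show how the stream is read. One plausible shape, sketched here under a loud assumption, is an async iterable of chunks that each carry a `content` string. Neither that chunk shape nor the `collectStream` helper is confirmed by this page, so treat both as hypothetical.

```javascript
// Assumption: a streamed response can be consumed as an async iterable of
// chunks shaped like { content: string }. The real SDK may differ.
// collectStream is a hypothetical helper for illustration.
async function collectStream(stream, onChunk = () => {}) {
  let text = '';
  for await (const chunk of stream) {
    const piece = chunk.content ?? '';
    text += piece;
    onChunk(piece); // e.g. append the piece to an editor's suggestion panel
  }
  return text;
}
```

An IDE plugin could pass an `onChunk` callback to render suggestions as they arrive and still receive the full text when the stream ends.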

Multi-Model Code Review

Run your PR through different AI models for diverse perspectives

const reviews = await Promise.all([
  bike4mind.review(pr, { model: 'claude-3.7-sonnet' }),
  bike4mind.review(pr, { model: 'o1' }),
  bike4mind.review(pr, { model: 'gemini-2.5-pro' })
]);

Result

Get security insights from Claude, logic review from o1, and performance tips from Gemini
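One design note on the fan-out above: `Promise.all` rejects as soon as any single review fails, so one provider outage would discard the other two reviews. A minimal sketch of a more forgiving variant uses the standard `Promise.allSettled`. It assumes only the `review(pr, { model })` call shape shown above, and `reviewWithModels` is a hypothetical helper name.

```javascript
// Sketch: run one PR through several models and keep whatever succeeds.
// `client` is any object exposing review(pr, { model }).
// reviewWithModels is a hypothetical helper, not a documented SDK method.
async function reviewWithModels(client, pr, models) {
  const settled = await Promise.allSettled(
    // The async wrapper turns synchronous throws into rejections too.
    models.map(async (model) => client.review(pr, { model }))
  );
  return settled.map((result, i) =>
    result.status === 'fulfilled'
      ? { model: models[i], ok: true, review: result.value }
      : { model: models[i], ok: false, error: String(result.reason) }
  );
}
```

Each entry in the returned array reports which model it came from and whether it succeeded, so a failed review can be shown as a warning instead of failing the whole check.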

Automated Documentation

Generate and maintain docs that stay in sync with code

const docs = await bike4mind.artifacts.create({
  type: 'mermaid',
  content: await bike4mind.generateDiagram(codeStructure)
});

Result

Living documentation with diagrams, API specs, and examples

Impact:

75% faster development, 90% better documentation

Built for Developers, by Developers

Powerful APIs

RESTful & WebSocket

Standard REST for requests, WebSocket for real-time streaming

Batch Processing

Process thousands of requests efficiently with our batch API

Webhooks

Get notified when long-running tasks complete

GraphQL (Soon)

Query exactly what you need with our upcoming GraphQL API

Built for Scale

Auto-Scaling

Handle 10x traffic spikes without breaking a sweat

Global Edge Network

Low latency worldwide with CloudFront distribution

Intelligent Caching

Semantic cache reduces costs and improves speed

99.95% Uptime

Enterprise SLA with redundancy and failover
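For readers wondering what a semantic cache does differently from an exact-match cache, here is a minimal sketch of the general technique: store each answer next to an embedding of its prompt, and serve it again when a new prompt's embedding is close enough by cosine similarity. This illustrates the idea only and is not Bike4Mind's implementation. The `SemanticCache` class, the 0.9 threshold, and the expectation that callers supply embeddings are all assumptions.

```javascript
// Cosine similarity between two equal-length numeric vectors.
function cosineSimilarity(a, b) {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

// Hypothetical in-memory semantic cache: linear scan, best match above a threshold.
class SemanticCache {
  constructor(threshold = 0.9) {
    this.threshold = threshold;
    this.entries = []; // each entry: { embedding: number[], value: any }
  }

  set(embedding, value) {
    this.entries.push({ embedding, value });
  }

  // Returns the cached value for the most similar prompt, or undefined on a miss.
  get(embedding) {
    let best = null;
    let bestScore = -Infinity;
    for (const entry of this.entries) {
      const score = cosineSimilarity(embedding, entry.embedding);
      if (score > bestScore) {
        best = entry;
        bestScore = score;
      }
    }
    return best !== null && bestScore >= this.threshold ? best.value : undefined;
  }
}
```

A hit skips the model call entirely, which is where the cost and latency savings come from. The threshold trades hit rate against the risk of returning an answer to a question that only looks similar.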

SDKs for Every Stack

Official SDKs with full type safety, auto-retry, and intelligent error handling
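As a rough picture of what auto-retry usually involves, the sketch below wraps any async call in exponential backoff. The retry count, delays, and the `withRetry` name are illustrative assumptions. The SDKs' actual retry policy is not described on this page.

```javascript
// Default delay helper; injectable so callers and tests can swap it out.
const defaultSleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Sketch: retry an async function with exponential backoff.
// withRetry is a hypothetical helper, not the SDK's documented behavior.
async function withRetry(fn, { retries = 3, baseDelayMs = 200, sleep = defaultSleep } = {}) {
  let lastError;
  for (let attempt = 0; attempt <= retries; attempt++) {
    try {
      return await fn(attempt);
    } catch (err) {
      lastError = err;
      if (attempt === retries) break; // out of attempts, give up
      await sleep(baseDelayMs * 2 ** attempt); // 200 ms, 400 ms, 800 ms, ...
    }
  }
  throw lastError;
}
```

A production version would typically retry only on transient failures such as timeouts or rate limits, and add jitter so many clients do not retry in lockstep.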

Ready to Build Without the Glue Code?

Stop stitching together LangChain, vector databases, and prompt templates. We built all of that into one platform so you can focus on your product.

5-Minute Setup

OpenAPI Spec

Live Support